MIT chip cuts the power consumption of neural networks by 95%

Neural networks are powerful, but power-hungry. Engineers at the Massachusetts Institute of Technology (MIT) have developed a new chip that cuts a neural network's power consumption by 95%, which could in theory allow such networks to run even on battery-powered mobile devices. Today's smartphones keep getting smarter, offering more and more AI-powered services such as virtual assistants and real-time translation. But the neural networks behind these services usually process the data in the cloud, and smartphones merely send data back and forth.

This is not ideal, because it requires a high-bandwidth connection and means that sensitive data is transmitted and stored beyond the user's reach. But the enormous amounts of energy needed to power neural networks running on GPUs cannot be supplied in a device that runs on a small battery.

Engineers at MIT have developed a chip that reduces a neural network's energy consumption by 95%. It does so by dramatically reducing the need to transfer data back and forth between memory and processors.

Neural networks are composed of thousands of interconnected artificial neurons arranged in layers. Each neuron receives input from several neurons in the layer below, and if the combined input passes a certain threshold, it transmits an output to multiple neurons in the layer above. The strength of each connection between neurons is determined by a weight, which is set during training.

This means that for each neuron, the chip has to fetch the input data for a particular connection and that connection's weight from memory, multiply them, store the result, and then repeat the process for every input. That is a lot of data moving back and forth, and a lot of energy spent.
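The fetch, multiply, store, repeat cycle described above can be sketched in a few lines of Python. This is only an illustration of the conventional approach, not the chip's firmware: the function name `neuron_output`, the example values, and the `memory_reads` counter are all hypothetical, and counting two reads per input is a simplifying assumption.

```python
def neuron_output(inputs, weights, threshold=0.0):
    """Compute one neuron's weighted sum the conventional way.

    Every input requires two separate fetches (the input value and the
    connection weight), which is the data movement the article describes.
    """
    memory_reads = 0  # hypothetical counter, for illustration only
    total = 0.0
    for i in range(len(inputs)):
        x = inputs[i]    # fetch the input for this connection
        w = weights[i]   # fetch the weight of this connection
        memory_reads += 2
        total += x * w   # multiply and store the running result
    fired = total > threshold  # the neuron fires only above the threshold
    return total, fired, memory_reads


# Made-up example values: three inputs and three connection weights.
total, fired, reads = neuron_output([0.5, 1.0, 0.25], [0.2, -0.4, 0.8])
print(total, fired, reads)
```

Even for this three-input neuron the loop performs six fetches, and the count grows linearly with the number of connections.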

MIT's new chip fixes this by computing all the inputs in parallel within the memory, using analog circuitry. This significantly reduces the amount of data that has to be moved, leading to significant energy savings.

This approach requires the connection weights to be binary rather than a range of values, but previous theoretical work has shown that this does not have much impact on accuracy, and the researchers found that the chip's results differed by 2-3% from those of a conventional neural network running on a standard computer.
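To see why binary weights simplify the arithmetic, here is a minimal Python sketch. The article says only that the weights are binary; encoding them as +1 and -1 and deriving them from the sign of each original weight are assumptions made for illustration, and all names and values are hypothetical. The sketch does not model the analog circuitry, and it does not reproduce the 2-3% figure.

```python
def binarize(weights):
    """Map each real-valued weight to +1 or -1 by its sign (assumed scheme)."""
    return [1 if w >= 0 else -1 for w in weights]


def dot(xs, ws):
    """Ordinary weighted sum with real-valued weights."""
    return sum(x * w for x, w in zip(xs, ws))


def binary_dot(xs, signs):
    """Weighted sum with +1/-1 weights: only additions and subtractions."""
    total = 0.0
    for x, s in zip(xs, signs):
        total = total + x if s == 1 else total - x
    return total


# Made-up example values.
inputs = [0.5, 1.0, 0.25, 0.75]
weights = [0.2, -0.4, 0.8, -0.1]
binary_weights = binarize(weights)

full = dot(inputs, weights)                 # full-precision result
binary = binary_dot(inputs, binary_weights) # binary-weight result
print(binary_weights, full, binary)
```

With weights restricted to two values, no multiplication is needed at all. The two sums differ in magnitude here, which is expected for a single neuron; the accuracy comparison in the article refers to the output of a whole trained network, not to individual sums.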

This is not the first time scientists have built chips that process data in memory to reduce the power consumption of neural networks, but it is the first time the approach has been used to run the powerful neural networks known for image processing.

"The results show impressive specifications for the energy-efficient implementation of convolution operations within a memory array," says Dario Gil, vice president of artificial intelligence at IBM.

"It definitely opens the possibility of using more complex convolutional neural networks to classify images and videos in the Internet of things in the future."

And this is of interest not only to R&D groups. The desire to put AI on devices such as smartphones, household appliances, and all sorts of IoT devices is pushing many in Silicon Valley toward low-power chips.

Apple has integrated its Neural Engine into the iPhone X to power, for example, facial recognition, and Amazon is rumored to be developing its own AI chips for the next generation of Echo digital assistants.

Large chip manufacturers are also increasingly focusing on machine learning, which pushes them to make their devices even more efficient. Earlier this year, ARM introduced two new chips: the Arm Machine Learning processor, aimed at general AI tasks from translation to facial recognition, and the Arm Object Detection processor, which detects, for example, faces in images.

Qualcomm's latest mobile chip, the Snapdragon 845, includes a graphics processor and is heavily focused on AI. The company also introduced the Snapdragon 820E, which is intended for drones, robots, and industrial devices.

Looking further ahead, IBM and Intel are developing neuromorphic chips, whose architecture is inspired by the human brain and its incredible energy efficiency. This could theoretically allow TrueNorth (IBM) and Loihi (Intel) to run powerful machine learning using only a small fraction of the energy of conventional chips, but these projects are still purely experimental.

Making chips that bring neural networks to life while sparing the battery will be very difficult. But at the current pace of innovation, "very difficult" seems quite feasible.

Recommended

Can genes create the perfect diet for you?

Diet on genotype can be a way out for many, but it still has a lot of questions Don't know what to do to lose weight? DNA tests promise to help you with this. They will be able to develop the most individual diet, because for this they will use the m...

How many extraterrestrial civilizations can exist nearby?

If aliens exist, why don't we "hear" them? In the 12th episode of Cosmos, which aired on December 14, 1980, co-author and host Carl Sagan introduced viewers to the same equation of astronomer Frank Drake. Using it, he calculated the potential number ...

Why does the most poisonous plant in the world cause severe pain?

The pain caused to humans by the Gimpi-gympie plant can drive him crazy Many people consider Australia a very dangerous place full of poisonous creatures. And this is a perfectly correct idea, because this continent literally wants to kill everyone w...

Related News

Russia and China will jointly explore the moon and deep space

the Federal space Agency and Chinese national space administration (CNCA) signed an agreement in which both parties intend to work together on space research. The Russian space Agency reported that the documents were signed by rep...

The first experiment at the Collider in Dubna near Moscow began

In the suburban town of Dubna is running the first experiment on newly built by the joint Institute for nuclear research (JINR) ion accelerator complex NICA (NICA, Nuclotron-based Ion Collider fAcility). Reportedly, the device is ...

Touch a loved one has a pain relieving effect

the human Touch is always nice, but other than that, if you believe new research group of scientists from France and Israel, they have a number of very interesting effects. For example, using tactile contact two loved ones sinhron...

Scientists caught the signals from the first stars in the Universe

the Early stage of formation remains largely a mystery to modern science. But in a new study published in the journal Nature, the researchers gave convincing arguments when it began to form the first stars. After the Big Bang that...

Scientists have discovered a new kind of stem cells

If reversing the biological clock of every cell of the human body, it will be the same as one of the varieties . It begins with them the growth of multicellular organisms, stem cells can renew themselves to form new stem cells, di...

Scientists have found that diet affects our emotional state

One of the popular advertising claims: you — not you when you're hungry. However, our mood and emotional state affects not only hunger, but also the one we use. To such conclusion experts of the medical center when rush Univ...

There is life in the driest desert on Earth. So she could be on Mars

When searching for a new potentially habitable planetary bodies astronomers always pay attention to one aspect – the presence or absence of this planetary body of water. They are so used. On Earth there is water, and it is an impo...

Are you ready to get dangerous parasites for the sake of science?

Sometimes in order to test the cure for any disease, scientists have had to recruit a group of volunteers ready to put their lives in danger for the greater good. Of course, most subjects pay some financial compensation for possib...

What would happen if the Earth will be 2°C warmer?

If the world will become warmer by two degrees Celsius, we are doomed. To prevent this, the UN signed the Paris agreement, the international Treaty under which the signatories will attempt to maintain the average global temperatur...

Waiting for "robot of Rembrandt": when machines will start to do really?

Cellist Jan Vogler believed that art makes us human. But what if machines, too, will begin to create art? Above, for example, you can see that they were able to create all sorts of artificial intelligences in a creative field. The...

Can the dark matter to produce "dark life"?

the Vast majority of mass in our Universe is invisible. And for quite a long time physicists are trying to understand what is this elusive mass. If it consists of particles, it is hoped that the Large hadron Collider will be able ...

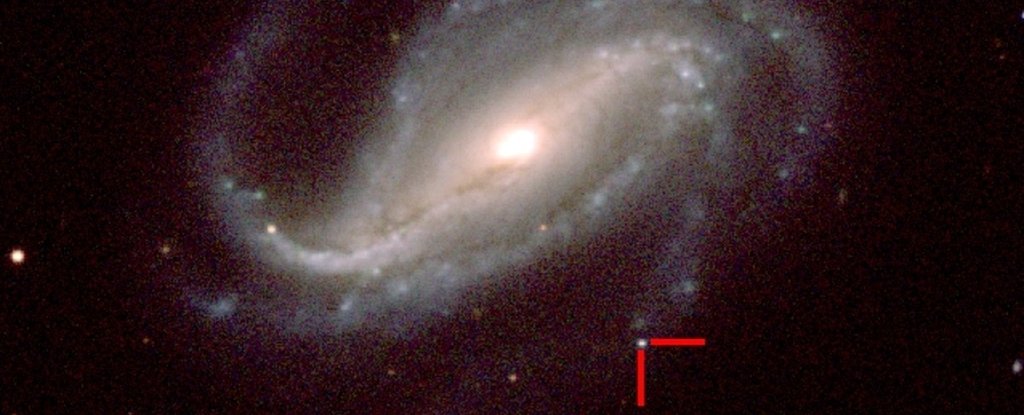

Amateur astronomer first ever got a picture of the appearance of a supernova

it would Seem, what only well-documented facts of cosmic phenomena have not yet to science. However, in space there are many interesting things that scientists know, but nonetheless never seen in person. It also turns out that a w...

Red wine can prevent teeth and gum disease

Between the supporters of a healthy lifestyle and Amateurs skip dinner a couple of glasses constantly stimulating debates about who is right and who is deeply mistaken. Despite the fact that alcohol in large quantities is definite...

the Study of several tens of galaxies within several billion light years from our own, allowed to open a few black holes, which greatly exceeds our expectations about how big they can grow. A recent study not only helps us better ...

Artificial intelligence from Google will instantly detect a heart attack

it would Seem that the new can come up in the field of methods of diagnosis of diseases of the heart, because all is already invented – know yourself improve existing methods! However, researchers from the biomedical company Veril...

We can change our own biology. But is this society?

the Improvement of our own biology may seem like something out of science fiction category, but attempts to improve humanity, in fact, were made thousands of years ago. Every day we improve ourselves with laborious exercise, medit...

Starfish can see in the dark (and not only)

If you're lucky and once you are on the ocean, you will see sea stars. They come in all sizes and colors, but have you ever wondered how it megalopae or multi-armed creature manages to live in the oceans, remaining so different fr...

Plants colonized Earth for 100 million years earlier than expected

during the first four billion of its existence, our planet was devoid of any life, except microbes. The situation changed radically at the moment when the Earth began to green up , which created a favorable environment for the col...

SpaceX received permission to launch a very valuable cargo

we are Talking about a new space telescope, TESS, permission to run which with the help of rocket Falcon 9, the company Ilona Mask received from the National Directorate of the USA on Aeronautics and space research (NASA). In addi...

Could the Universe be conscious?

Over the past 40 years, scientists gradually opened a strange fact about our Universe: its laws of physics and the initial conditions of the Universe are perfectly tuned in order for life got a chance to develop. It turns out that...

Comments (0)

This article has no comment, be the first!