Does our brain use deep learning to understand the world?

The moment Dr. Blake Richards heard about deep learning, he knew he was facing more than just a method that would revolutionize artificial intelligence. He realized he was looking at something fundamental about the human brain. It was the early 2000s, and Richards was taking a course at the University of Toronto with Geoff Hinton. Hinton, one of the originators of the algorithm that would go on to conquer the world, was teaching an introduction to his learning method inspired by the human brain.

The key words here are "inspired by the brain." Despite Richards's conviction, the odds were stacked against him. The human brain, as it turns out, appears to lack a critical function that is built into deep learning algorithms. On the surface, these algorithms violate basic biological facts already established by neuroscientists.

But what if deep learning and the brain are actually compatible?

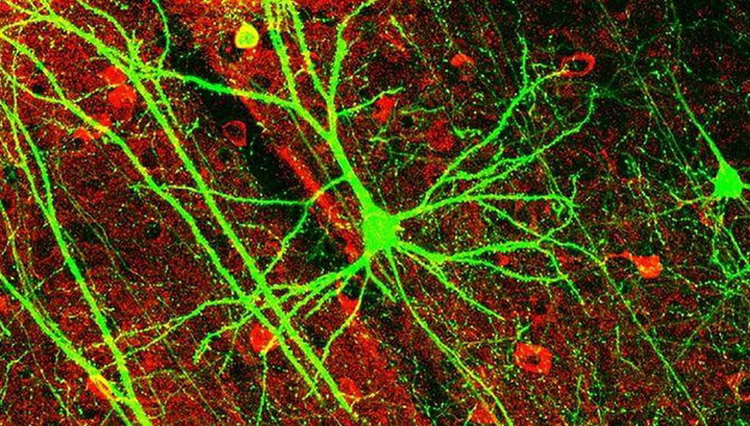

Now, in a new study published in eLife, Richards, working with DeepMind, has proposed a new algorithm based on the biological structure of neurons in the neocortex. The neocortex is home to the highest cognitive functions, such as reasoning, prediction and flexible thinking.

The team wired artificial neurons into a layered network and gave it a classic computer vision task: identifying handwritten digits.

The new algorithm performed well. More importantly, it learned from training examples the way deep learning algorithms do, yet it was built entirely on the fundamental biology of the brain.

"Deep learning is possible in a biological structure," the scientists concluded.

Because the model currently exists only as a computer simulation, Richards hopes to pass the baton to experimental neuroscientists, who could test whether the algorithm operates in a real brain.

If it does, the data could be passed back to computer scientists to develop massively parallel and efficient algorithms to run on our machines. This is the first step toward merging the two fields in a "virtuous circle" of discovery and innovation.

Scapegoating

Although you've probably heard that artificial intelligence recently beat the very best players at Go, you may not know exactly how the algorithms behind this AI work.

In a nutshell, deep learning relies on an artificial neural network made of virtual "neurons." Like a tall skyscraper, the network is structured as a hierarchy: low-level neurons process the input, for example the horizontal or vertical bars that make up the digit 4, while high-level neurons handle abstract aspects of the number 4.

To train the network, you give it examples of what you're looking for. The signal propagates through the network (up the floors of the building), and each neuron tries to pick out something fundamental about the "four."
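For readers who want to see the skyscraper in code, here is a minimal sketch of a signal climbing a small layered network, written in Python with NumPy. The layer sizes (a flattened 28×28 image in, ten digit scores out), the sigmoid nonlinearity and the random, untrained weights are illustrative assumptions rather than details of any particular model, so the final "guess" is essentially arbitrary.

```python
import numpy as np

def sigmoid(z):
    """Logistic nonlinearity squashing each neuron's input into (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))

def forward(x, weights, biases):
    """Propagate an input up the hierarchy, one layer ("floor") at a time.

    Returns the activity of every layer so the intermediate representations
    can be inspected: early layers see raw pixels, later layers see
    combinations of the features computed below them.
    """
    activities = [x]
    for W, b in zip(weights, biases):
        x = sigmoid(W @ x + b)
        activities.append(x)
    return activities

rng = np.random.default_rng(0)
sizes = [784, 64, 32, 10]  # assumed: 28x28 pixels -> two hidden layers -> 10 digit classes
weights = [rng.normal(0, 0.1, size=(n_out, n_in)) for n_in, n_out in zip(sizes[:-1], sizes[1:])]
biases = [np.zeros(n_out) for n_out in sizes[1:]]

image = rng.random(784)            # stand-in for a flattened handwritten digit
layers = forward(image, weights, biases)
print([a.shape for a in layers])
print("guess:", int(np.argmax(layers[-1])))
```

Each entry in `layers` is one floor of the building; only training turns these random floors into useful feature detectors.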

Like a child learning something new, the network does poorly at first. It outputs whatever it thinks looks like a number four, and the results come out in the spirit of Picasso.

But that is how learning works: the algorithm compares its output with the ideal output and calculates the difference between them (read: the errors). The error is then "backpropagated" through the network, telling each neuron, in effect, "that's not what you're looking for, look harder."
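The compare-and-blame step can be sketched for a tiny two-layer network. This is textbook backpropagation with a squared-error loss, not code from the study; the layer sizes, learning rate and one-hot target are assumptions for illustration. Note that the hidden layer's share of the blame is computed through `W2.T`, the transpose of the forward weights: this reuse of the same connections in both directions is the symmetry requirement that turns out to be hard to square with real synapses.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def loss_and_grads(x, target, W1, W2):
    """Forward pass, error, and backpropagated gradients for a 2-layer net."""
    # Forward pass: input -> hidden -> output
    h = sigmoid(W1 @ x)
    y = sigmoid(W2 @ h)
    # Compare the output with the ideal output: the raw error
    error = y - target
    loss = 0.5 * np.sum(error ** 2)
    # Output-layer "blame": the error scaled by the sigmoid's slope
    delta_out = error * y * (1 - y)
    # Backpropagate: hidden neurons receive blame through W2.T,
    # i.e. the same weights used going forward, transposed
    delta_hidden = (W2.T @ delta_out) * h * (1 - h)
    grad_W2 = np.outer(delta_out, h)
    grad_W1 = np.outer(delta_hidden, x)
    return loss, grad_W1, grad_W2

rng = np.random.default_rng(1)
x = rng.random(8)
target = np.zeros(4)
target[2] = 1.0                 # assumed one-hot label: "this is class 2"
W1 = rng.normal(0, 0.5, (6, 8))
W2 = rng.normal(0, 0.5, (4, 6))

loss_before, g1, g2 = loss_and_grads(x, target, W1, W2)
lr = 0.5
W1 -= lr * g1                   # nudge every weight against its share of the error
W2 -= lr * g2
loss_after, _, _ = loss_and_grads(x, target, W1, W2)
print(f"loss before: {loss_before:.4f}, after one step: {loss_after:.4f}")
```

Repeating this step over many examples is, in essence, the training loop the article describes.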

After millions of examples and repetitions, the network starts to work perfectly.

The error signal is extremely important for learning. Without effective backpropagation, the network would not know which of its neurons are at fault. By hunting for a scapegoat, the AI improves itself.

The brain does this too. But how? We have no idea.

Biological dead end

The obvious alternative is that the deep learning solution simply doesn't work in the brain.

Backpropagation of error is an extremely important function, and it requires specific infrastructure to work properly.

First, every neuron in the network must receive the error notification. But in the brain, neurons are connected to only a few downstream partners, if any. For backpropagation to work in the brain, neurons in the early layers would have to take in information from billions of connections along descending pathways, and that is biologically impossible.

And while some deep learning algorithms adopt a local form of backpropagation, essentially between neighboring neurons, this requires the forward and backward connections to be symmetrical. That almost never happens at the brain's synapses.

More modern algorithms adopt a different strategy, implementing a separate feedback pathway that helps neurons find errors locally. Although this is more biologically plausible, the brain doesn't have a separate network dedicated to finding scapegoats.
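A well-known example of such a separate feedback path is "feedback alignment," proposed by Timothy Lillicrap and colleagues, in which errors are sent back through fixed random weights instead of the transposed forward weights. Treating it as representative of the strategy described here is our assumption. The sketch below trains a tiny network on the XOR problem this way; the network size, learning rate, number of epochs and random seed are arbitrary choices for illustration.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

rng = np.random.default_rng(2)
# XOR: a classic toy task that a network without a hidden layer cannot solve
X = np.array([[0, 0], [0, 1], [1, 0], [1, 1]], dtype=float)
T = np.array([[0], [1], [1], [0]], dtype=float)
X = np.hstack([X, np.ones((4, 1))])          # constant input acts as a bias

n_hidden = 8
W1 = rng.normal(0, 1.0, (3, n_hidden))       # forward weights, input -> hidden
W2 = rng.normal(0, 1.0, (n_hidden, 1))       # forward weights, hidden -> output
B = rng.normal(0, 1.0, (1, n_hidden))        # fixed random feedback weights (never learned)

def mse(W1, W2):
    Y = sigmoid(sigmoid(X @ W1) @ W2)
    return 0.5 * np.sum((Y - T) ** 2)

initial_loss = mse(W1, W2)
lr = 0.5
for epoch in range(5000):
    H = sigmoid(X @ W1)                 # hidden activity, shape (4, n_hidden)
    Y = sigmoid(H @ W2)                 # output activity, shape (4, 1)
    delta_out = (Y - T) * Y * (1 - Y)   # output error, computed locally at the output
    # Backprop would use delta_out @ W2.T here; feedback alignment uses B instead,
    # so the hidden layer never needs to know the forward weights W2.
    delta_hidden = (delta_out @ B) * H * (1 - H)
    W2 -= lr * H.T @ delta_out
    W1 -= lr * X.T @ delta_hidden
final_loss = mse(W1, W2)
print(f"loss: {initial_loss:.3f} -> {final_loss:.3f}")
print(np.round(sigmoid(sigmoid(X @ W1) @ W2).ravel(), 2))
```

Because `B` is random and never updated, no neuron needs a copy of another layer's forward weights; in practice the forward weights tend to adapt so that the random feedback becomes useful.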

But it does have neurons with complex structures, unlike the uniform "balls" currently used in deep learning.

Branching network

The scientists drew inspiration from the pyramidal cells that populate the human cortex.

"Most of these neurons are shaped like trees, with their 'roots' deep in the brain and their 'branches' reaching toward the surface," says Richards. "Interestingly, the roots receive one set of inputs and the branches receive another."

Curiously, the structure of neurons often turns out to be "just right" for solving computational problems efficiently. Take sensory processing: the bottoms of pyramidal neurons sit right where they need to be to receive sensory input, while the tops are well positioned to receive error signals via feedback.
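The "roots and branches" arrangement can be sketched as a neuron with two electrically separated compartments: a basal compartment that sums bottom-up (sensory) input and drives the neuron's output, and an apical compartment that sums top-down feedback. This is a simplified illustration loosely modelled on the idea described here; the class name `TwoCompartmentNeuron`, the sigmoid and the choice to let only the basal compartment drive the output are our assumptions, not the study's equations.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

class TwoCompartmentNeuron:
    """Hypothetical pyramidal-like unit with segregated dendrites.

    basal_w:  weights on bottom-up inputs arriving at the "roots"
    apical_w: weights on top-down feedback arriving at the "branches"
    """

    def __init__(self, basal_w, apical_w):
        self.basal_w = np.asarray(basal_w, dtype=float)
        self.apical_w = np.asarray(apical_w, dtype=float)

    def basal_potential(self, bottom_up):
        return float(self.basal_w @ bottom_up)

    def apical_potential(self, top_down):
        return float(self.apical_w @ top_down)

    def step(self, bottom_up, top_down):
        """Return (firing rate, apical signal).

        The firing rate depends only on the basal compartment, so feedback
        does not disturb the feedforward computation; the apical signal is
        kept separate and can be read out as a teaching (error) signal.
        """
        rate = sigmoid(self.basal_potential(bottom_up))
        apical = sigmoid(self.apical_potential(top_down))
        return rate, apical

neuron = TwoCompartmentNeuron(basal_w=[0.8, -0.4, 0.3], apical_w=[1.0, -1.0])
sensory = np.array([1.0, 0.5, 0.2])
for feedback in (np.array([0.0, 0.0]), np.array([1.0, 0.0])):
    rate, apical = neuron.step(sensory, feedback)
    print(f"feedback={feedback}: rate={rate:.3f}, apical={apical:.3f}")
```

Changing the feedback alters the apical signal but leaves the firing rate untouched, which is what lets one cell carry both the forward computation and the error signal at once.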

Could this complex structure be evolution's solution to the problem of signaling errors?

The scientists built a multilayered neural network based on earlier algorithms. But instead of uniform neurons, they gave the neurons in the middle layers, sandwiched between the input and output, separate compartments, much like real neurons. Trained on handwritten digits, the algorithm performed much better than a single-layer network, despite lacking classical backpropagation. The cell structure itself was enough to assign the error: at the right moment, each neuron combined both sources of information to find the best solution.
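The two-phase idea can be sketched on a toy problem. In a "forward" phase the network produces its own guess; in a "target" phase the output is nudged to the correct answer. Each hidden neuron compares the feedback arriving at its apical compartment in the two phases, and that purely local difference stands in for the backpropagated error. This is a heavily simplified illustration loosely modelled on the approach described above, assuming rate-based sigmoid units, fixed random feedback weights `Y` and three noisy clusters of random vectors in place of handwritten digits; it is not the study's actual model, equations or training setup.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

rng = np.random.default_rng(3)
n_in, n_hidden, n_out = 10, 20, 3

# Toy data (assumed): three noisy clusters standing in for digit classes
prototypes = rng.random((n_out, n_in))
labels = rng.integers(0, n_out, size=90)
inputs = prototypes[labels] + rng.normal(0, 0.1, (90, n_in))
targets = np.eye(n_out)[labels]             # one-hot target vectors

W0 = rng.normal(0, 0.5, (n_hidden, n_in))   # basal (feedforward) weights of hidden neurons
W1 = rng.normal(0, 0.5, (n_out, n_hidden))  # output-layer weights
Y = rng.normal(0, 1.0, (n_hidden, n_out))   # fixed random feedback onto apical dendrites

def forward(x):
    h = sigmoid(W0 @ x)
    return h, sigmoid(W1 @ h)

def dataset_loss():
    return sum(0.5 * np.sum((forward(x)[1] - t) ** 2) for x, t in zip(inputs, targets))

def accuracy():
    preds = np.array([np.argmax(forward(x)[1]) for x in inputs])
    return np.mean(preds == labels)

initial_loss = dataset_loss()
lr = 0.2
for epoch in range(100):
    for x, t in zip(inputs, targets):
        h, y_forward = forward(x)
        # Forward phase: apical dendrites receive feedback of the network's own guess
        apical_forward = sigmoid(Y @ y_forward)
        # Target phase: the output is nudged to the correct answer and fed back
        apical_target = sigmoid(Y @ t)
        # Output layer: local delta rule on its own error
        W1 -= lr * np.outer((y_forward - t) * y_forward * (1 - y_forward), h)
        # Hidden layer: the apical difference between phases acts as the error signal
        W0 += lr * np.outer((apical_target - apical_forward) * h * (1 - h), x)
final_loss = dataset_loss()
print(f"loss {initial_loss:.2f} -> {final_loss:.2f}, accuracy {accuracy():.2f}")
```

No hidden neuron ever reads the output layer's forward weights `W1`; everything it needs arrives at its own apical compartment.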

This has a biological basis: neuroscientists have long known that a neuron's input branches perform local computations that can be integrated with signals propagating back from its output branches. But we don't know whether the brain actually works this way, which is why Richards is calling on neuroscientists to find out.

Moreover, this network tackles the problem much like traditional deep learning does: it uses a multilayer structure to extract progressively more abstract ideas about each digit.

"It is a feature of deep learning," the authors explain.

Deep brain learning

This story will no doubt take more twists and turns as computer scientists build more and more biological detail into AI algorithms. Richards and his team are looking at top-down predictive function, in which signals from higher levels directly affect how lower levels respond to input.
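A top-down effect of this kind can be sketched by letting a lower layer's activity depend both on its bottom-up input and on feedback from the layer above, and re-running the two for a fixed number of steps. The function name `settle`, the feedback gain `g`, the step count and the random weights are hypothetical choices for illustration, not the team's model; setting `g = 0` recovers an ordinary feedforward network.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def settle(x, W_up, W_out, W_down, g, steps=20):
    """Iterate a two-layer circuit in which the higher layer feeds back down.

    g scales the top-down input; with g = 0 the lower layer ignores feedback.
    """
    h = sigmoid(W_up @ x)                         # initial, purely bottom-up response
    for _ in range(steps):
        y = sigmoid(W_out @ h)                    # higher-level interpretation
        h = sigmoid(W_up @ x + g * (W_down @ y))  # lower level re-reads input in light of it
    return h, sigmoid(W_out @ h)

rng = np.random.default_rng(4)
W_up = rng.normal(0, 0.5, (6, 4))
W_out = rng.normal(0, 0.5, (3, 6))
W_down = rng.normal(0, 0.5, (6, 3))
x = rng.random(4)

h_ff, _ = settle(x, W_up, W_out, W_down, g=0.0)   # feedforward only
h_fb, _ = settle(x, W_up, W_out, W_down, g=1.0)   # with top-down feedback
print(np.round(h_ff, 3))
print(np.round(h_fb, 3))
```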

Feedback from the upper levels doesn't just improve error signaling; it can also encourage neurons doing low-level processing to do a "better" job in real time, says Richards. So far, the network has not outperformed other, non-biological deep learning networks. But that doesn't matter.

"Deep learning has had a huge impact on AI, but to date its impact on neuroscience has been limited," the study's authors say. Now neuroscientists have a lead they can test experimentally: whether the structure of neurons underlies a natural deep learning algorithm. Perhaps within the next ten years, a mutually beneficial exchange of data between neuroscientists and AI researchers will begin.

...Recommended

Can genes create the perfect diet for you?

Diet on genotype can be a way out for many, but it still has a lot of questions Don't know what to do to lose weight? DNA tests promise to help you with this. They will be able to develop the most individual diet, because for this they will use the m...

How many extraterrestrial civilizations can exist nearby?

If aliens exist, why don't we "hear" them? In the 12th episode of Cosmos, which aired on December 14, 1980, co-author and host Carl Sagan introduced viewers to the same equation of astronomer Frank Drake. Using it, he calculated the potential number ...

Why does the most poisonous plant in the world cause severe pain?

The pain caused to humans by the Gimpi-gympie plant can drive him crazy Many people consider Australia a very dangerous place full of poisonous creatures. And this is a perfectly correct idea, because this continent literally wants to kill everyone w...

Related News

Edit the genes slowed the development of amyotrophic lateral sclerosis in mice

Considered incurable degenerative disease called amyotrophic lateral sclerosis (also known as Lou Gehrig's disease and Lou Gehrig's disease) managed to slow down by editing genes in laboratory mice. For the first time in people wi...

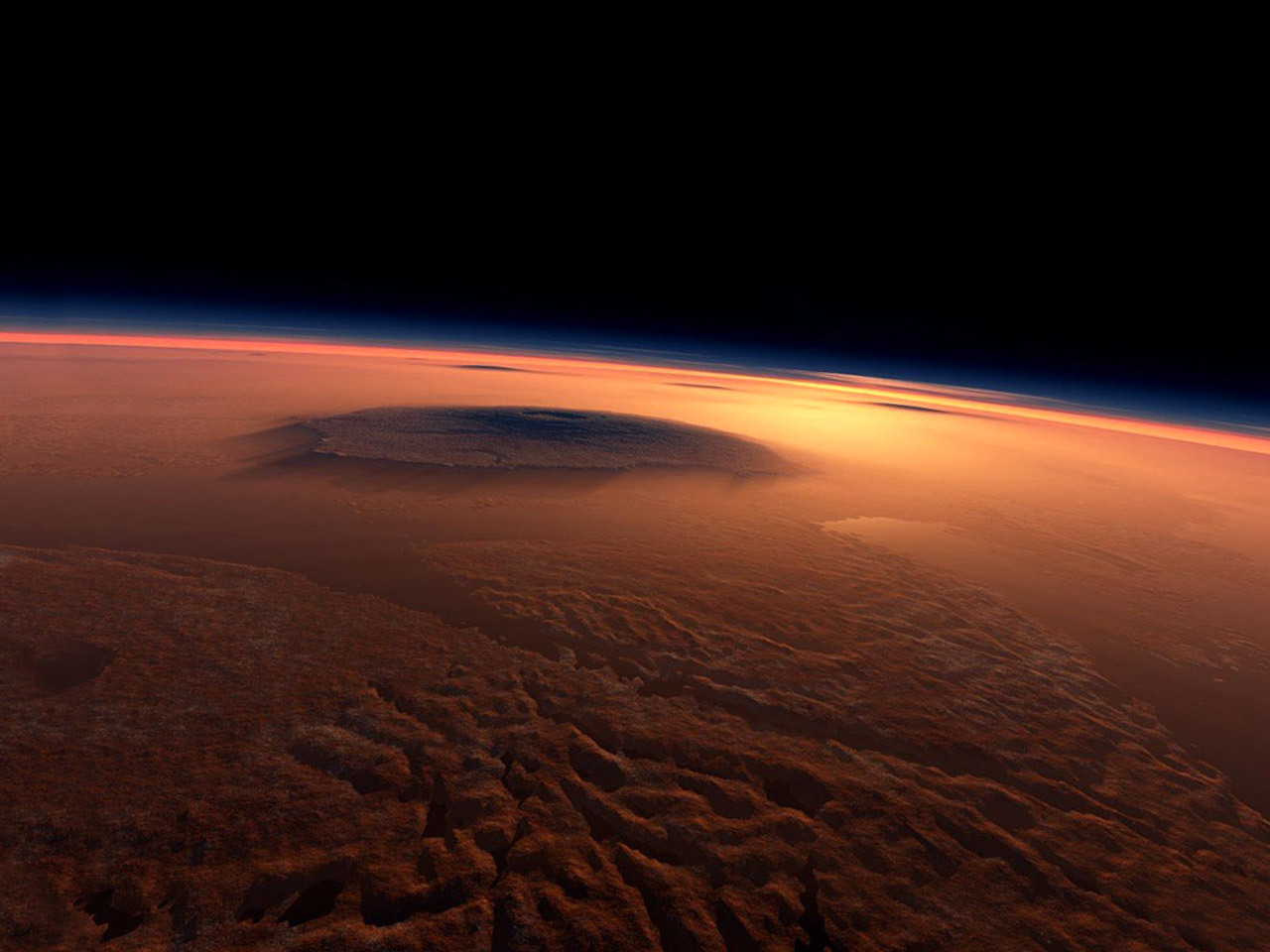

Where was the water from Mars? Scientists have a new hypothesis

Planetary scientists believe that billions of years ago Mars was warmer and wetter than it is now. Where was his water? In the new study, researchers impose the assumption that most of the water is still on the red planet, only it...

The solar system could have formed inside a giant space bubble

There are different theories about how it may have formed our Solar system. But at the moment scientists have not yet come to a common agreement and a model that could explain all the peculiarities and oddities associated with it....

The Arecibo Observatory is considered a potentially hazardous asteroid Phaeton

After a few months of downtime in connection with the liquidation of consequences of hurricane "Maria" the main radio telescope of the Arecibo Observatory and one of the most powerful radio telescopes in the world returned to its ...

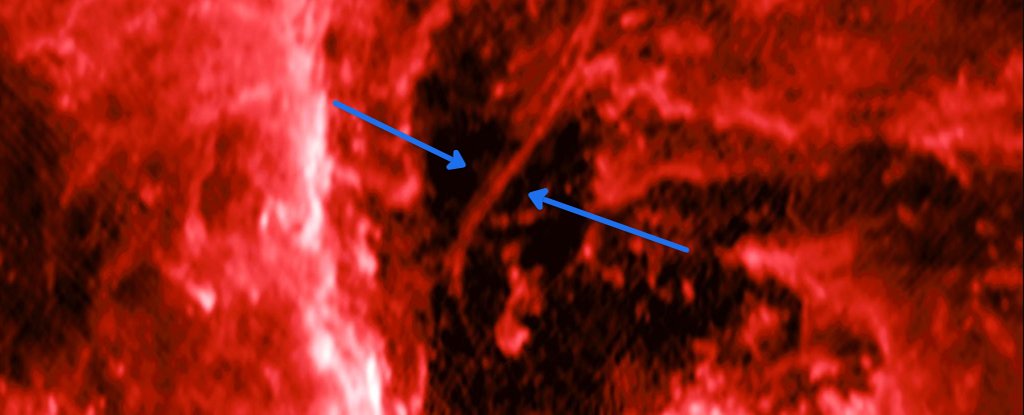

Near the center of the milky Way discovered a strange "thread"

Astronomers have been exploring the center of the milky Way, which is a supermassive black hole Sagittarius A* at the mass, surpassing our Sun in 4 million times. But thanks to technology, scientists have new tools for observation...

The classical picture of the neurons in the brain was wrong

the Human brain contains about 86 billion neurons. Each of these neurons connect with other cells, forming trillions of connections. The place of contact of two neurons or a neuron and a signal-receiving cells is called the synaps...

Alien hunter was skeptical of the latest "revelations" of the Pentagon

last week the publisher of The New York Times and Politico have published articles in which it was reported that the us government within a few years led funding programmes to study . Objective of the "Advanced program identify av...

For people with Parkinson's disease have developed "laser shoes"

Modern technology quite often come to the rescue of the people, especially when it comes to improving people with various incurable diseases. Such as . The phrase "laser shoes" may sounds a bit ridiculous, but how else can you cal...

Our brain is able to create false memories, but it's not always a bad thing

You've never been in a situation when together with someone witnessed an event, but somehow different then I remembered, what happened? It would seem that you were there, saw the same thing, but for some reason have differing memo...

Scientists say the discovery of the gene bad breath

Bad breath is not always associated with noncompliance with oral hygiene. Scientists say that in about 0.5 to 3 percent of the people cause bad smell are other sources. For example, a bad smell occurs when inflammation of the sinu...

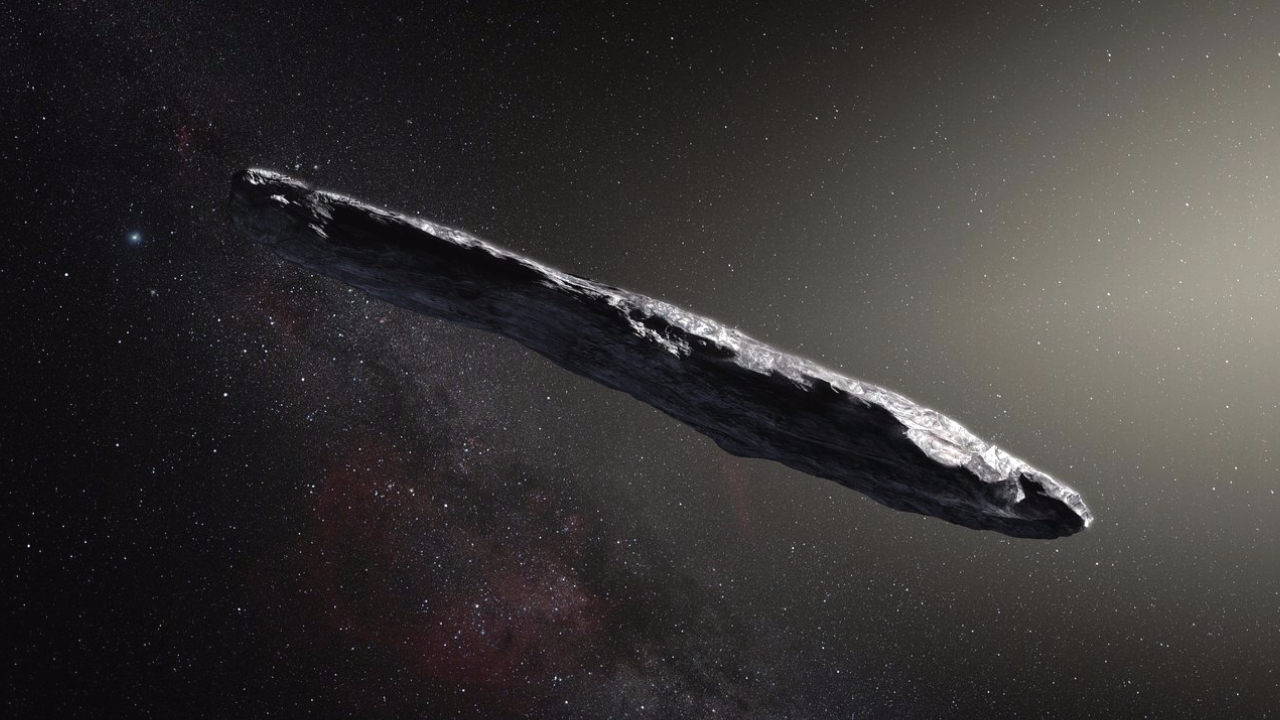

Omwamwi is not a space ship, but he could be "passengers"

last Wednesday, scientists project a Breakthrough Listen directed gaze parabolic radio telescope green Bank National radio astronomy Observatory in the direction Omwamwi — the mysterious oblong space object, in fact the firs...

Testers Virgin Hyperloop One broke up the capsule to 387 km/h

a Team of testers Virgin Hyperloop One again set a record — in the recent tests held at the site DevLoop near Las Vegas, they were able to accelerate the capsule to a speed of 387 kilometers per hour. the Inside of the pip...

Come in large numbers here! Mars is not a close neighbor of the Earth

an international group of scientists could find the causes of the deep differences in the composition of Earth and Mars. The fact that the Red planet was not always where it is now located, but much further away from our planet in...

Japanese scientists have created an "unbreakable" glass

Perhaps one of the most common breakdowns associated with smartphones, is cracked as a result of severe impact or fall of the screen. Engineers for many years trying to solve this problem, developing new protective coatings and mo...

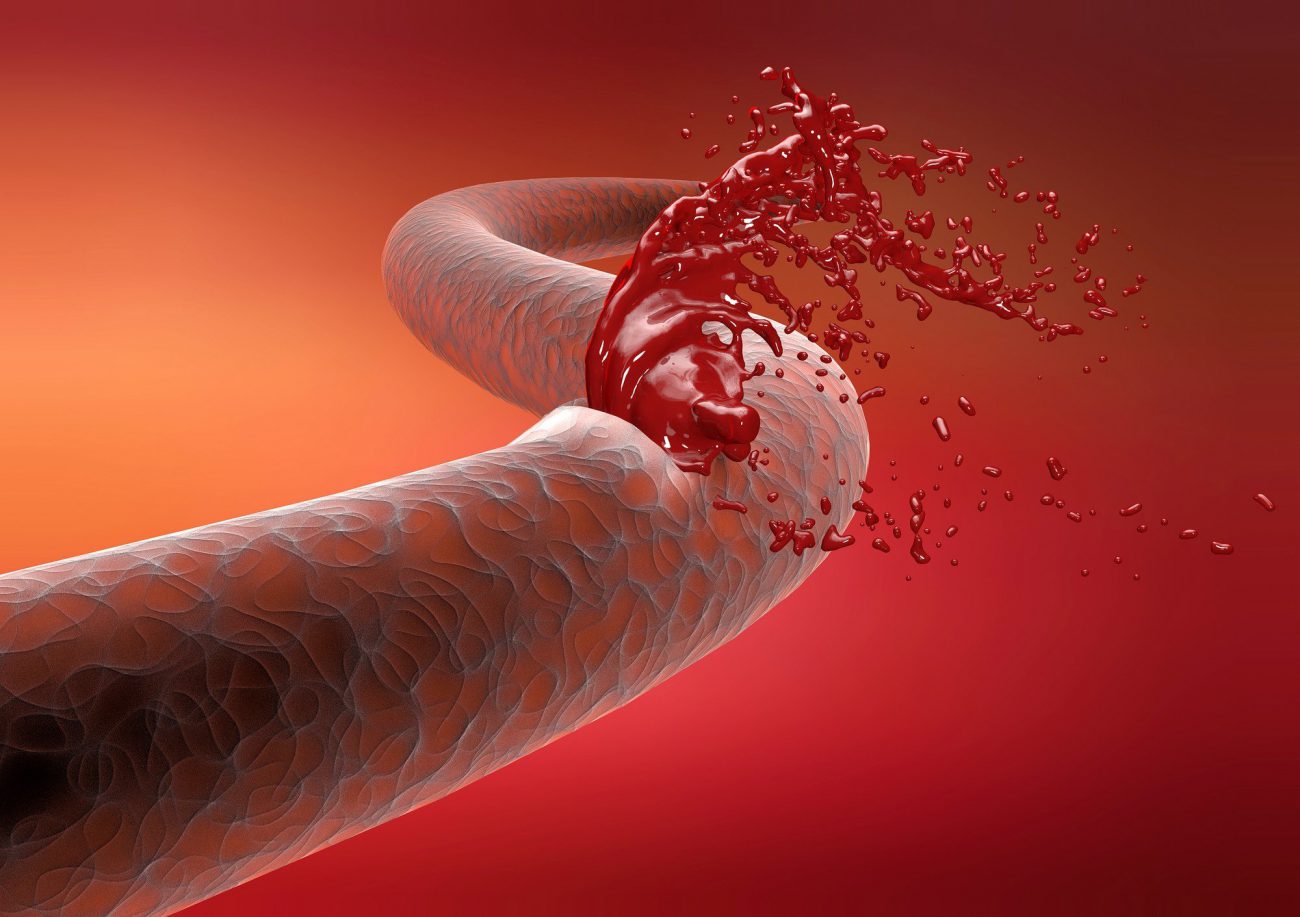

British doctors experienced new cure hemophilia

Hemophilia — very dangerous disease associated with the violation of the processes of blood coagulation. In hemophilia the bleeding from a person does not stop, even for minor injuries. At this point the disease is considere...

While genes do affect intelligence, we cannot improve the mind

"first, let me tell you how smart I am. So. Fifth grade math teacher said I was clever at maths and, looking back, I have to admit that she was right. I can tell you that time exists, but it cannot be integrated into the fundament...

Intel offers a way of decoding DNA with the help of mining

Intel reports that recently received a patent on the technology of capacity utilization of the mining equipment for the processing of genetic data. The authors of the idea of Ned Smith and Rajesh Purnachandra in the explanatory Me...

AI Goolge begins to find hidden treasures in the data telescope "Kepler"

Through the use of neural networks Google space Agency NASA discovered the eighth planet, it would seem, has studied the sun-like star Kepler-90, located in 2545 light years from us. To find a "lost" managed in a database of the s...

Mars Rover "opportunity" has survived eight winters on the red planet

the Initial estimated operation time landed on the Red planet on 25 January 2004 the Mars Rover "opportunity" was about 90 earth days. However, the little robot has surpassed all expectations and is working on Mars for 13 years an...

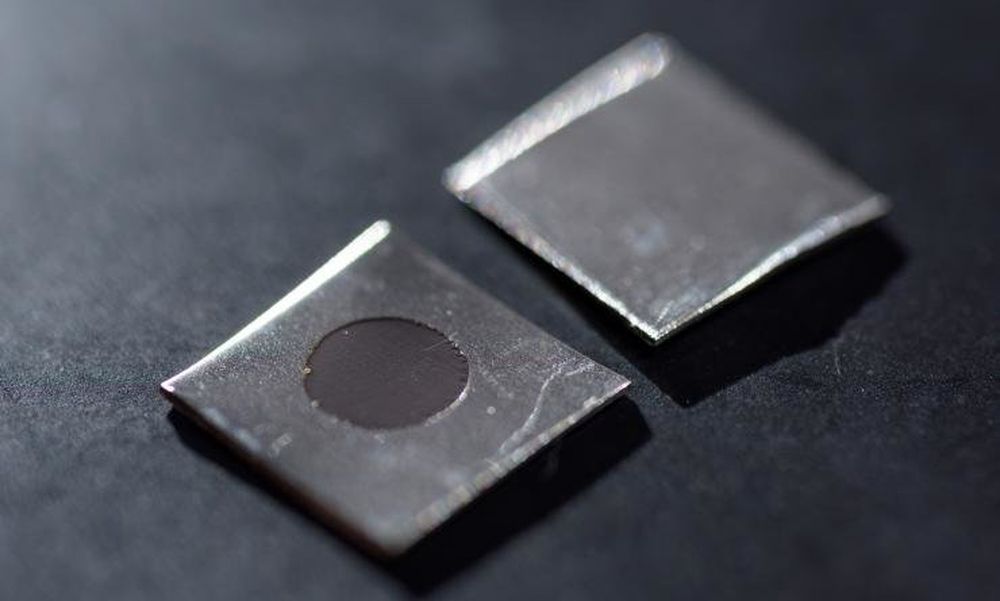

Scientists have created a metal coating destroys bacteria

a Team of researchers from Georgia Institute of Technology through a process of electrochemical etching created on the surface of the stainless steel alloy nano coating (texture of the tiny protruding spikes), kill bacteria, while...

Comments (0)

This article has no comment, be the first!